As data becomes more critical to business success, organizations need tools that can process, manage, and analyze large volumes of data from many sources. This is where Azure Synapse comes in: a cloud-based analytics service that helps organizations derive insights from their data, in near real time where needed. In this blog post, we will explore what Azure Synapse is, how it works, and how it can benefit your organization.

What is Azure Synapse? Azure Synapse is a cloud-based analytics service that combines data warehousing and big data analytics in a single solution. It allows organizations to ingest, prepare, and manage large amounts of structured, semi-structured, and unstructured data from various sources. With Azure Synapse, organizations can process data in real time or in batch mode and then analyze it using a range of tools and languages.

How does Azure Synapse work? Azure Synapse evolved from Azure SQL Data Warehouse, whose engine lives on as dedicated SQL pools, and it uses Azure Data Lake Storage Gen2 as the default storage for a workspace. It provides a unified experience for data ingestion, data preparation, and data analysis. Here is an overview of how Azure Synapse works:

Data Ingestion: Azure Synapse allows organizations to ingest data from various sources, including Azure Blob Storage, Azure Data Lake Storage Gen2, and Azure Event Hubs. It also supports a wide range of data formats, including structured data from databases, semi-structured data from sources such as JSON or XML files, and unstructured data such as text, images, and videos.
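To make the difference between structured and semi-structured input concrete, here is a minimal local sketch in plain Python. It does not call any Azure Synapse API; the sample data, field names, and function names are all hypothetical, and the inline strings stand in for files that would normally land in a data lake. It only shows the general idea of bringing differently shaped inputs into one uniform record format.

```python
import csv
import io
import json

# Hypothetical sample inputs standing in for files landed in a data lake.
CSV_TEXT = "device_id,temperature\nsensor-1,21.5\nsensor-2,19.0\n"
JSON_LINES = '{"device_id": "sensor-3", "reading": {"temperature": 22.25}}\n'


def rows_from_csv(text):
    """Parse structured CSV text into a list of flat dictionaries."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        {"device_id": row["device_id"], "temperature": float(row["temperature"])}
        for row in reader
    ]


def rows_from_json_lines(text):
    """Flatten semi-structured JSON (one object per line) into the same shape."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        rows.append(
            {
                "device_id": record["device_id"],
                "temperature": float(record["reading"]["temperature"]),
            }
        )
    return rows


def ingest():
    """Combine both sources into one list of uniform records."""
    return rows_from_csv(CSV_TEXT) + rows_from_json_lines(JSON_LINES)
```

Calling `ingest()` returns three records with the same two keys, regardless of which format each one came from. In a real workspace this step would more likely be handled by a pipeline or a Spark job than by hand-written parsing.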

Data Preparation: After ingesting data, organizations can prepare it for analysis using tools such as Apache Spark pools, SQL pools (queried with T-SQL), or data flows. Azure Synapse provides a data preparation experience that lets users clean, transform, and join data in familiar languages such as SQL or Python.
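The clean, transform, and join steps described above can be sketched with ordinary SQL. The example below uses Python's built-in `sqlite3` module purely as a local stand-in for a SQL engine; it is not a Synapse SQL pool, T-SQL syntax differs in places, and the table names, column names, and sample rows are invented for illustration.

```python
import sqlite3


def prepare(conn):
    """Clean, transform, and join two hypothetical tables with plain SQL."""
    conn.executescript(
        """
        CREATE TABLE raw_orders (order_id INTEGER, customer_id INTEGER, amount TEXT);
        CREATE TABLE customers (customer_id INTEGER, name TEXT);

        INSERT INTO raw_orders VALUES (1, 10, ' 25.50 '), (2, 11, NULL), (3, 10, '4.50');
        INSERT INTO customers VALUES (10, 'Contoso'), (11, 'Fabrikam');
        """
    )
    query = """
        SELECT c.name,
               SUM(CAST(TRIM(o.amount) AS REAL)) AS total_amount  -- transform: text to number
        FROM raw_orders AS o
        JOIN customers AS c ON c.customer_id = o.customer_id      -- join: attach customer names
        WHERE o.amount IS NOT NULL                                -- clean: drop rows with no amount
        GROUP BY c.name
        ORDER BY c.name
    """
    return conn.execute(query).fetchall()


def run_example():
    """Run the preparation query against a throwaway in-memory database."""
    conn = sqlite3.connect(":memory:")
    try:
        return prepare(conn)
    finally:
        conn.close()
```

Here `run_example()` returns one row per customer that has at least one usable order. The same three ideas (filter bad rows, cast and trim values, join to a lookup table) carry over to SQL written against a warehouse, though the exact functions and types would need checking against that engine's documentation.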

Data Analysis: Once the data is prepared, organizations can analyze it using various tools and languages, including Azure Machine Learning, R, Python, and Power BI. Azure Synapse integrates with these tools, making it easy to build end-to-end data pipelines that can handle large-scale data processing and analytics workloads.

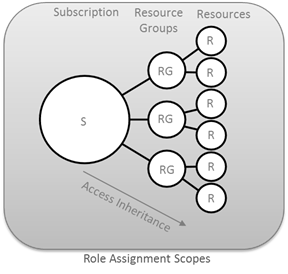

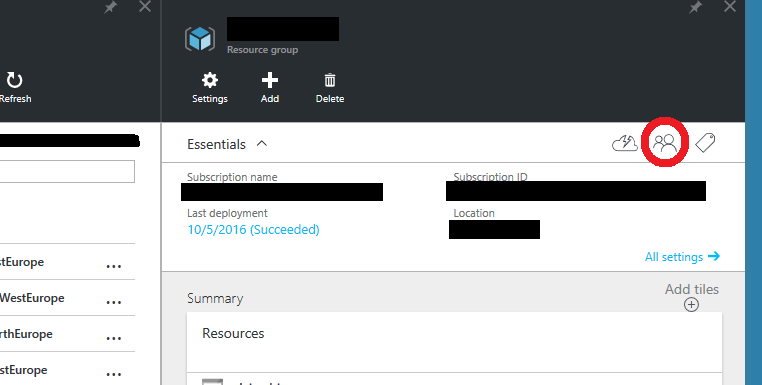

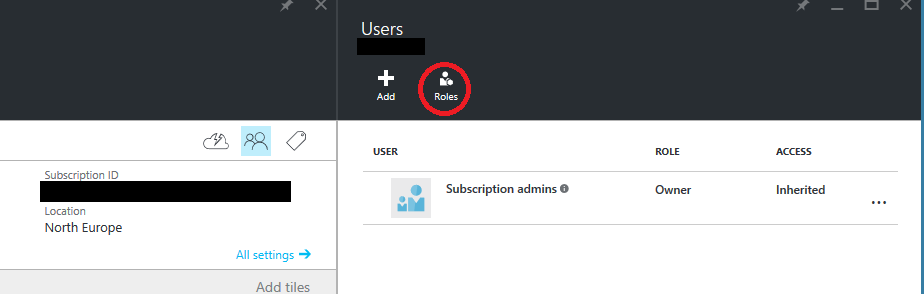

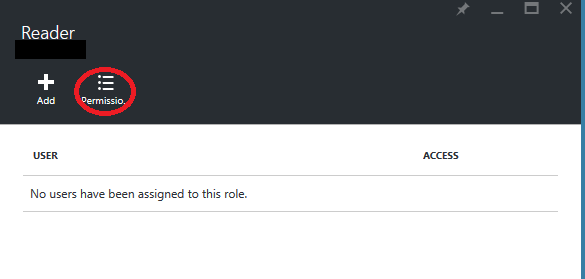

Security: Azure Synapse provides advanced security features, including data encryption at rest and in transit, role-based access control, and auditing and compliance tools. These features help organizations protect data privacy and meet their security requirements.

Benefits of Azure Synapse: Azure Synapse provides several benefits to organizations, including:

- Scalability: With Azure Synapse, organizations can scale their analytics workloads up or down to handle large volumes of data. Because they pay only for the resources they use, this can be a cost-effective solution.

- Integration: Azure Synapse integrates with other Azure services such as Azure Data Factory, Azure Machine Learning, and Power BI, allowing organizations to build end-to-end data pipelines.

- Real-time analytics: Azure Synapse allows organizations to perform real-time analytics on streaming data, enabling them to make decisions based on the most up-to-date information.

- Simplified data management: Azure Synapse provides a unified experience for data ingestion, preparation, and analysis, simplifying the data management process for organizations.
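To give a feel for what real-time analytics on streaming data involves, here is a small plain-Python sketch of a tumbling window, a common way to summarize events over fixed, non-overlapping time intervals. It is an illustration only and does not use any Azure Synapse API; the function name, the event shape, and the sample events are assumptions made for this example.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count events per key in fixed, non-overlapping time windows.

    `events` is an iterable of (timestamp_seconds, key) tuples.
    Returns a dict mapping each window start to a {key: count} dict.
    """
    windows = defaultdict(lambda: defaultdict(int))
    for timestamp, key in events:
        # Integer division assigns each event to the window that contains it.
        window_start = (timestamp // window_seconds) * window_seconds
        windows[window_start][key] += 1
    return {start: dict(counts) for start, counts in sorted(windows.items())}


# Hypothetical click events: (seconds since start, page name)
events = [(1, "home"), (4, "home"), (9, "cart"), (12, "home"), (19, "cart")]
result = tumbling_window_counts(events, window_seconds=10)
```

With a 10-second window, the first three events fall into the window starting at 0 and the last two into the window starting at 10. A production streaming engine would also have to deal with late or out-of-order events, which this sketch ignores.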